Tutorials

Step-by-step guides for common tasks.

| Tutorial | What You'll Build |
|---|---|
| Reproducing the Paper | Map SSRN paper claims to running code |
| Adding Data Sources | Add a new ETL extractor |
| API Examples | Query the REST API with curl and Python |

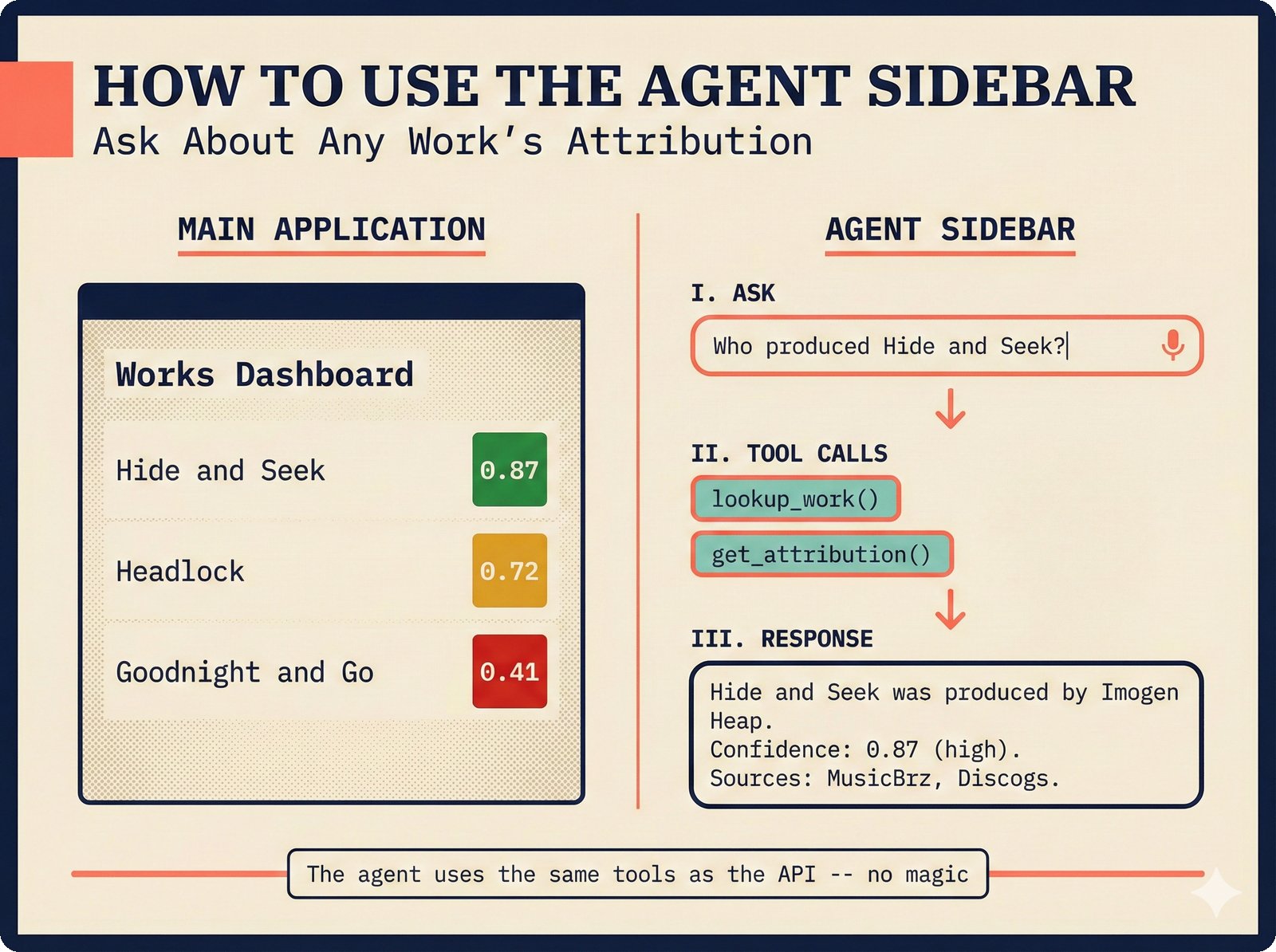

Using the Agent Sidebar

Agent sidebar interaction flow for the Music Attribution Scaffold. Non-technical users query music credits in natural language while the agent transparently invokes the same tools as the REST API, returning confidence-scored attribution data without requiring API knowledge (Teikari, 2026).

The CopilotKit sidebar lets you query attribution data using natural language. Type a question like "Who produced Hide and Seek?" and the agent calls the right tools behind the scenes -- lookup_work(), get_attribution() -- to return a confidence-scored answer with source provenance. The sidebar requires ANTHROPIC_API_KEY to be set in your environment. See API Examples for the agent SSE endpoint details.
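The tool chaining described above can be sketched roughly as follows. This is a minimal illustration, not the scaffold's actual implementation: only the tool names lookup_work() and get_attribution() come from the text, while their signatures, the Attribution record, and the in-memory data are assumptions made up for this example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Attribution:
    """Hypothetical record shape for one credit."""
    work_id: str
    role: str
    name: str
    confidence: float  # assumed to be in the range 0.0 to 1.0
    sources: list[str]


# Illustrative stand-in data only; the real tools query the attribution backend.
_WORKS = {"hide and seek": "work-001"}
_CREDITS = {
    "work-001": [
        Attribution("work-001", "producer", "Example Producer", 0.92,
                    ["source-a", "source-b"]),
    ]
}


def lookup_work(title: str) -> str | None:
    """Resolve a free-text title to a work ID (assumed signature)."""
    return _WORKS.get(title.strip().lower())


def get_attribution(work_id: str, role: str) -> list[Attribution]:
    """Return the credits for a work filtered by role (assumed signature)."""
    return [a for a in _CREDITS.get(work_id, []) if a.role == role]


def answer(title: str, role: str) -> str:
    """Chain the two tools the way the agent does behind the scenes."""
    work_id = lookup_work(title)
    if work_id is None:
        return f"No work found for {title!r}."
    credits = get_attribution(work_id, role)
    if not credits:
        return f"No {role} credit on record for {title!r}."
    best = max(credits, key=lambda a: a.confidence)
    sources = ", ".join(best.sources)
    return f"{best.name} ({role}, confidence {best.confidence:.2f}; sources: {sources})"


if __name__ == "__main__":
    print(answer("Hide and Seek", "producer"))
```

In the real sidebar the language model decides which tools to call; the fixed call order here only shows how a work lookup feeds the attribution query.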

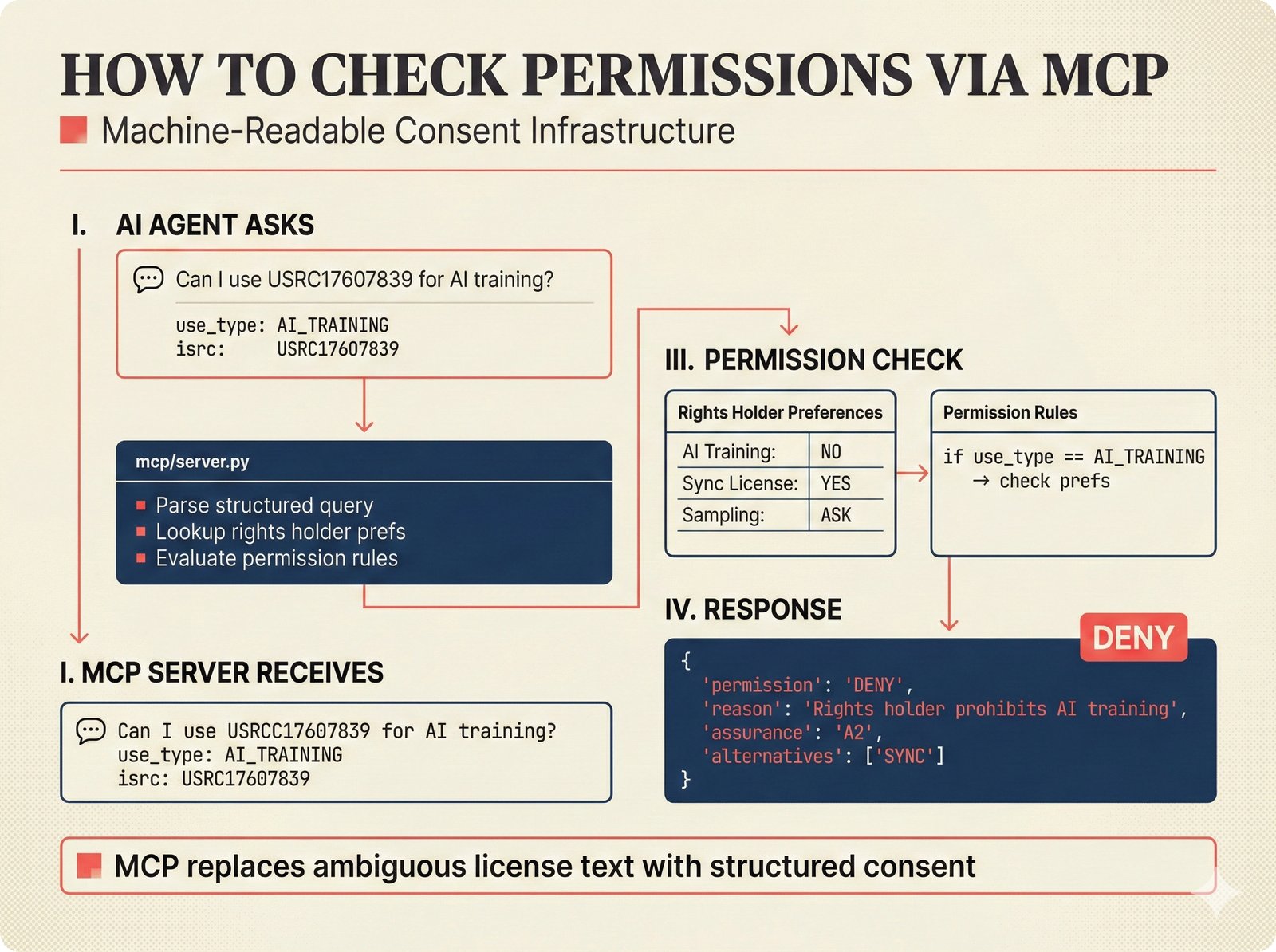

Checking Permissions via MCP

MCP consent infrastructure workflow for the Music Attribution Scaffold. An AI agent submits a structured permission query, the MCP server evaluates it against declared rights holder preferences, and returns an explicit decision with provenance -- embodying the paper's principle that consent must be machine-readable, not buried in license text (Teikari, 2026).

The MCP (Model Context Protocol) server turns ambiguous licensing into machine-readable permission checks. An AI agent asks a structured question like "Can I use this track for AI training?", the MCP server evaluates the rights holder's declared permissions, and returns an explicit ALLOW or DENY with provenance. See API Examples -- Check Permissions for curl and Python examples.
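The evaluation step can be pictured with a small sketch. Everything here is hypothetical: the PermissionQuery and PermissionResult shapes, the DECLARED table, and the default-deny rule for undeclared uses are assumptions for illustration, not the MCP server's real schema or policy.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Decision(str, Enum):
    ALLOW = "ALLOW"
    DENY = "DENY"


@dataclass(frozen=True)
class PermissionQuery:
    """Hypothetical structured question from an AI agent."""
    work_id: str
    use: str  # for example "ai_training"


@dataclass(frozen=True)
class PermissionResult:
    decision: Decision
    provenance: str  # where the decision came from


# Illustrative rights holder declarations: (work_id, use) -> (allowed, source).
DECLARED: dict[tuple[str, str], tuple[bool, str]] = {
    ("work-001", "ai_training"): (False, "rights holder declaration (illustrative)"),
    ("work-001", "streaming"): (True, "rights holder declaration (illustrative)"),
}


def check_permission(
    query: PermissionQuery,
    declared: dict[tuple[str, str], tuple[bool, str]] = DECLARED,
) -> PermissionResult:
    """Return an explicit ALLOW or DENY together with its provenance."""
    entry = declared.get((query.work_id, query.use))
    if entry is None:
        # Assumption: anything not explicitly declared is denied.
        return PermissionResult(Decision.DENY, "no declaration on file (default deny)")
    allowed, source = entry
    return PermissionResult(Decision.ALLOW if allowed else Decision.DENY, source)


if __name__ == "__main__":
    result = check_permission(PermissionQuery("work-001", "ai_training"))
    print(result.decision.value, "-", result.provenance)
```

The decision is always one of two explicit values and always carries a provenance string, which is the property the text emphasizes.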

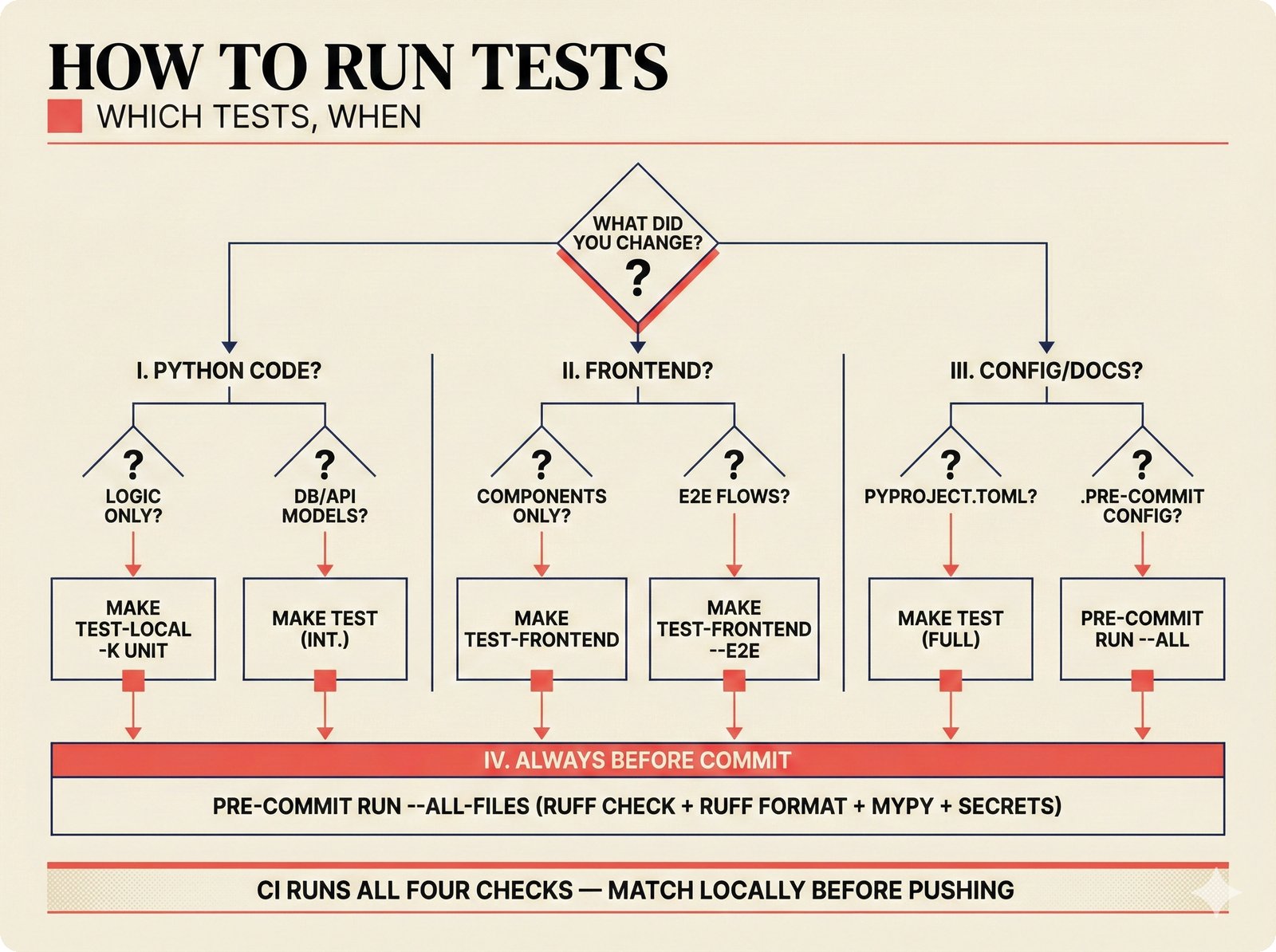

Running Tests

Test selection decision tree for the Music Attribution Scaffold. Contributors follow branching paths based on what they changed -- Python logic, database models, frontend components, or configuration -- to run the minimal necessary test suite, with pre-commit hooks as a universal quality gate before every commit (Teikari, 2026).

Choose the test suite based on what you changed. Python backend changes need make test-local (unit) or make test (integration); frontend changes need make test-frontend. All changes must pass pre-commit run --all-files before any commit. See Troubleshooting if tests fail unexpectedly.
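The selection rule can be expressed as a small helper. This is a sketch under assumptions: the make targets and the pre-commit command come from the text, but classifying files by suffix (and the particular suffix sets) is a simplification invented for this example.

```python
from __future__ import annotations

from pathlib import PurePosixPath

# Assumed suffix-to-area mapping; the real decision tree may use directories instead.
BACKEND_SUFFIXES = {".py"}
FRONTEND_SUFFIXES = {".ts", ".tsx", ".js", ".jsx", ".css"}


def select_commands(changed_files: list[str], integration: bool = False) -> list[str]:
    """Return the minimal list of test commands for a set of changed files."""
    suffixes = {PurePosixPath(name).suffix for name in changed_files}
    commands: list[str] = []
    if suffixes & BACKEND_SUFFIXES:
        # Unit tests by default, integration tests on request.
        commands.append("make test" if integration else "make test-local")
    if suffixes & FRONTEND_SUFFIXES:
        commands.append("make test-frontend")
    # Pre-commit hooks gate every change, whatever was touched.
    commands.append("pre-commit run --all-files")
    return commands


if __name__ == "__main__":
    for command in select_commands(["src/example.py", "frontend/App.tsx"]):
        print(command)
```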

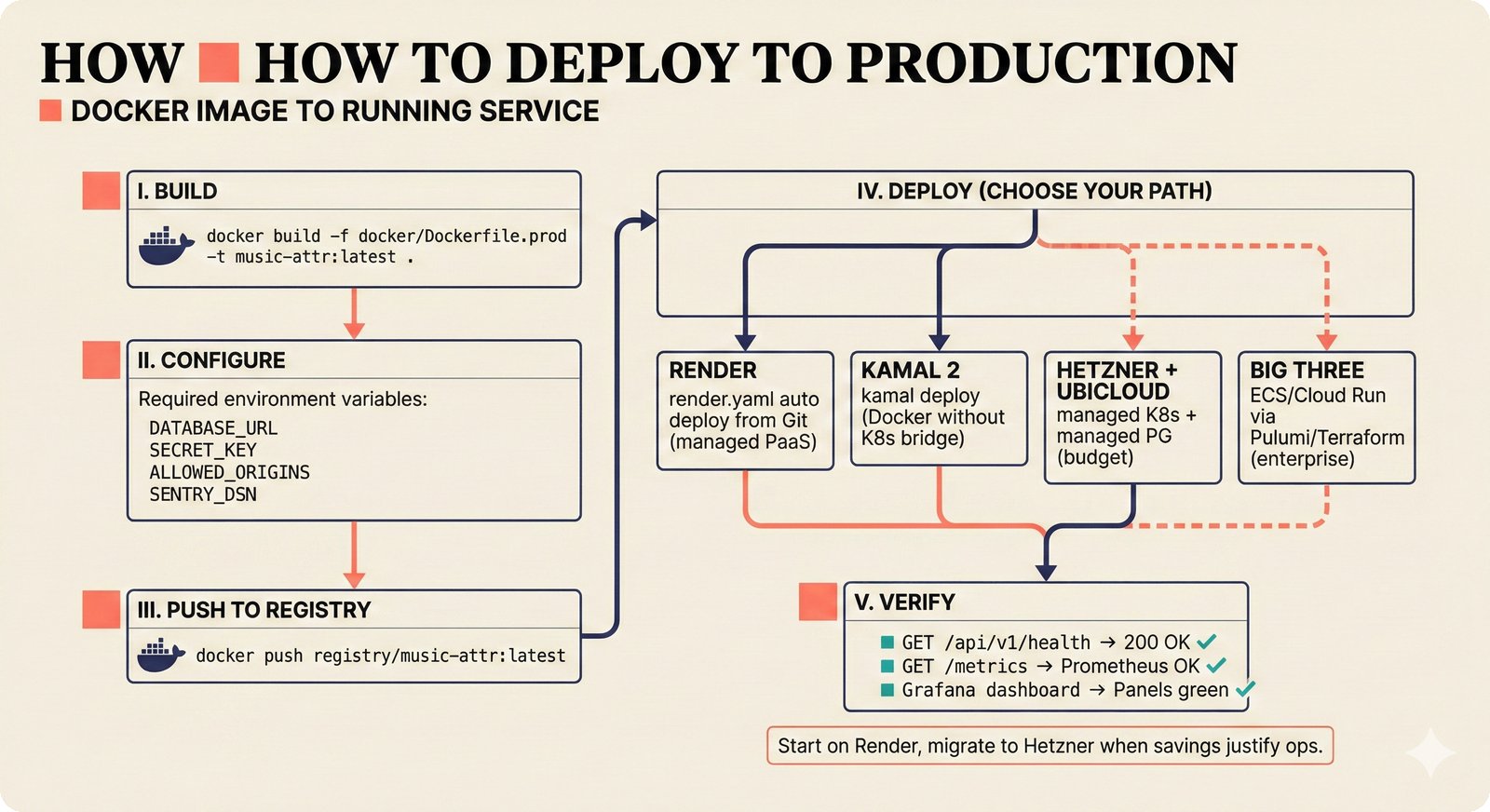

Deploying to Production

Production deployment pipeline for the Music Attribution Scaffold. Four deployment paths reflect PRD v2.1.0: Render for PaaS simplicity, Kamal 2 for Docker-without-K8s, Hetzner+Ubicloud for managed infrastructure at budget prices, and Big Three for enterprise compliance -- all converging on the same health and metrics verification endpoints (Teikari, 2026).

The scaffold ships as a production-ready Docker image with Prometheus metrics and health checks. Deployment follows five steps: build, configure, push, deploy (choose your path), and verify. Four paths are supported -- from Render PaaS to Hetzner bare-metal -- reflecting the scaffold philosophy that no single deployment path is "correct."
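The final verify step might look something like the sketch below. The endpoint paths /health and /metrics are assumptions (the text names the endpoints but not their URLs), and the check for a "# TYPE" line is only a heuristic for spotting Prometheus text-format output.

```python
from __future__ import annotations

from typing import Callable
from urllib.error import HTTPError
from urllib.request import urlopen


def http_get(url: str, timeout: float = 5.0) -> tuple[int, str]:
    """Fetch a URL and return (status code, body text)."""
    try:
        with urlopen(url, timeout=timeout) as resp:
            return resp.status, resp.read().decode("utf-8", errors="replace")
    except HTTPError as err:
        # urlopen raises on 4xx/5xx; report the status instead of failing.
        return err.code, ""


def verify_deployment(
    base_url: str,
    fetch: Callable[[str], tuple[int, str]] = http_get,
    health_path: str = "/health",    # assumed path
    metrics_path: str = "/metrics",  # assumed path
) -> dict[str, bool]:
    """Check that the health and metrics endpoints respond as expected."""
    base = base_url.rstrip("/")
    health_status, _ = fetch(base + health_path)
    metrics_status, metrics_body = fetch(base + metrics_path)
    return {
        "health": health_status == 200,
        # Heuristic: Prometheus text output usually carries "# TYPE" metadata lines.
        "metrics": metrics_status == 200 and "# TYPE" in metrics_body,
    }
```

Because all four deployment paths converge on the same endpoints, one check like this could be pointed at any of them by changing only base_url. The fetch parameter exists so the check can be exercised without a live server.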

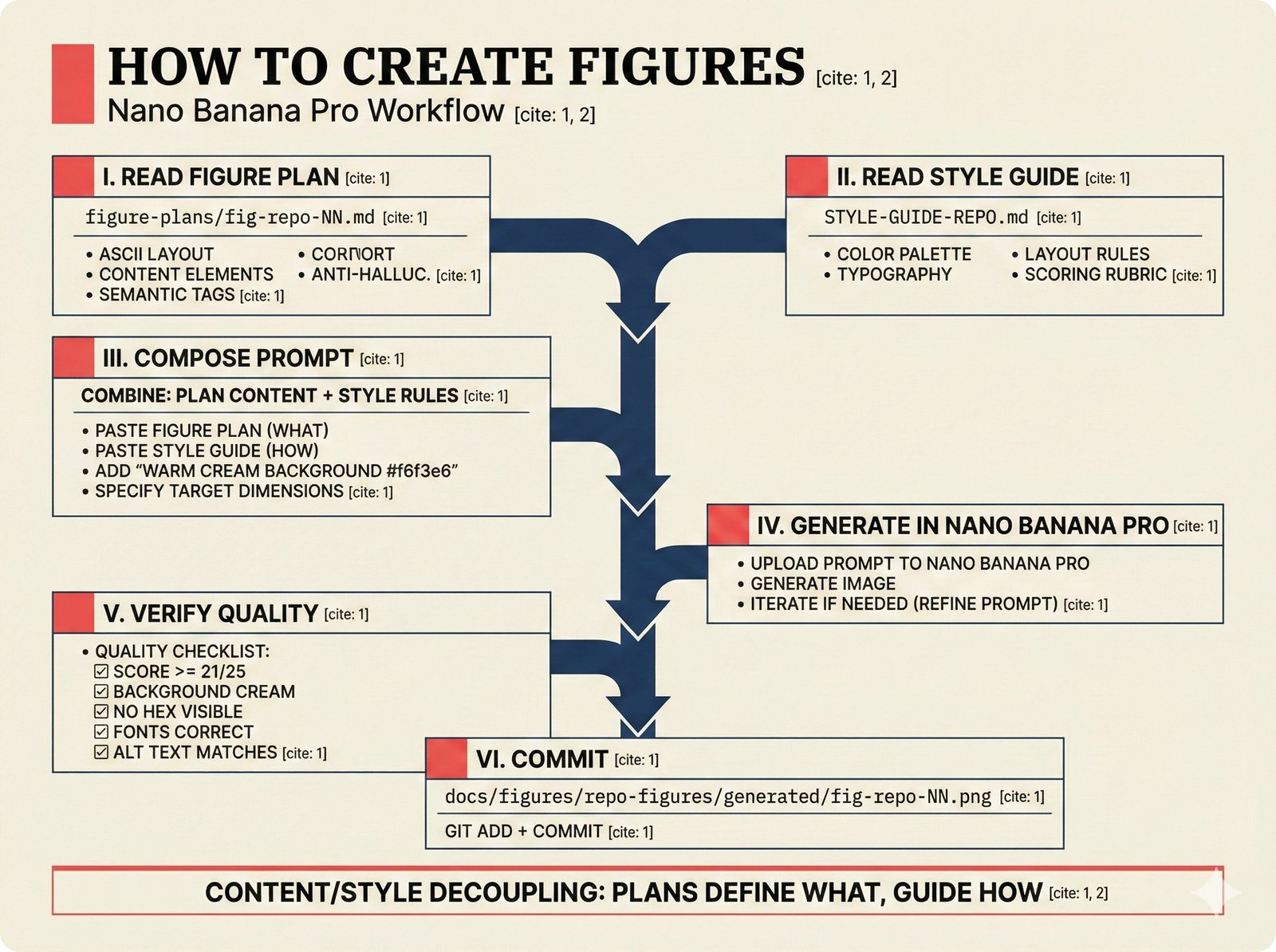

Creating Figures with Nano Banana Pro

Figure creation workflow for the Music Attribution Scaffold. The content-style decoupling principle separates semantic figure plans (what to communicate) from the visual style guide (how it should look), enabling reproducible, quality-gated figure generation via Nano Banana Pro with a minimum score threshold of 21/25 (Teikari, 2026).

Figure creation follows a six-step pipeline: read the figure plan, load the style guide, compose the generation prompt, generate in Nano Banana Pro, verify against the quality checklist (score >= 21/25), and commit to the repo. Plans define WHAT (content); the style guide defines HOW (visual). See docs/figures/repo-figures/figure-plans/ for all plan files and STYLE-GUIDE-REPO.md for the visual specification.
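The quality gate in step five reduces to a simple threshold check. In the sketch below, only the 21/25 threshold comes from the text; the five criterion names and the 0 to 5 scale per criterion are assumptions about how the checklist might be scored.

```python
from __future__ import annotations

THRESHOLD = 21  # minimum passing score, from the text
MAX_SCORE = 25  # maximum possible score, from the text
MAX_PER_CRITERION = 5  # assumption: five criteria scored 0 to 5 each


def total_score(scores: dict[str, int]) -> int:
    """Sum the checklist scores after validating each one."""
    for name, value in scores.items():
        if not 0 <= value <= MAX_PER_CRITERION:
            raise ValueError(f"{name}: score {value} is outside 0..{MAX_PER_CRITERION}")
    total = sum(scores.values())
    if total > MAX_SCORE:
        raise ValueError(f"total {total} exceeds {MAX_SCORE}")
    return total


def passes_quality_gate(scores: dict[str, int]) -> bool:
    """A figure may be committed only when its total meets the threshold."""
    return total_score(scores) >= THRESHOLD


if __name__ == "__main__":
    # Hypothetical criterion names, for illustration only.
    example = {"content": 5, "style": 4, "legibility": 4, "palette": 4, "layout": 4}
    print(total_score(example), passes_quality_gate(example))
```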

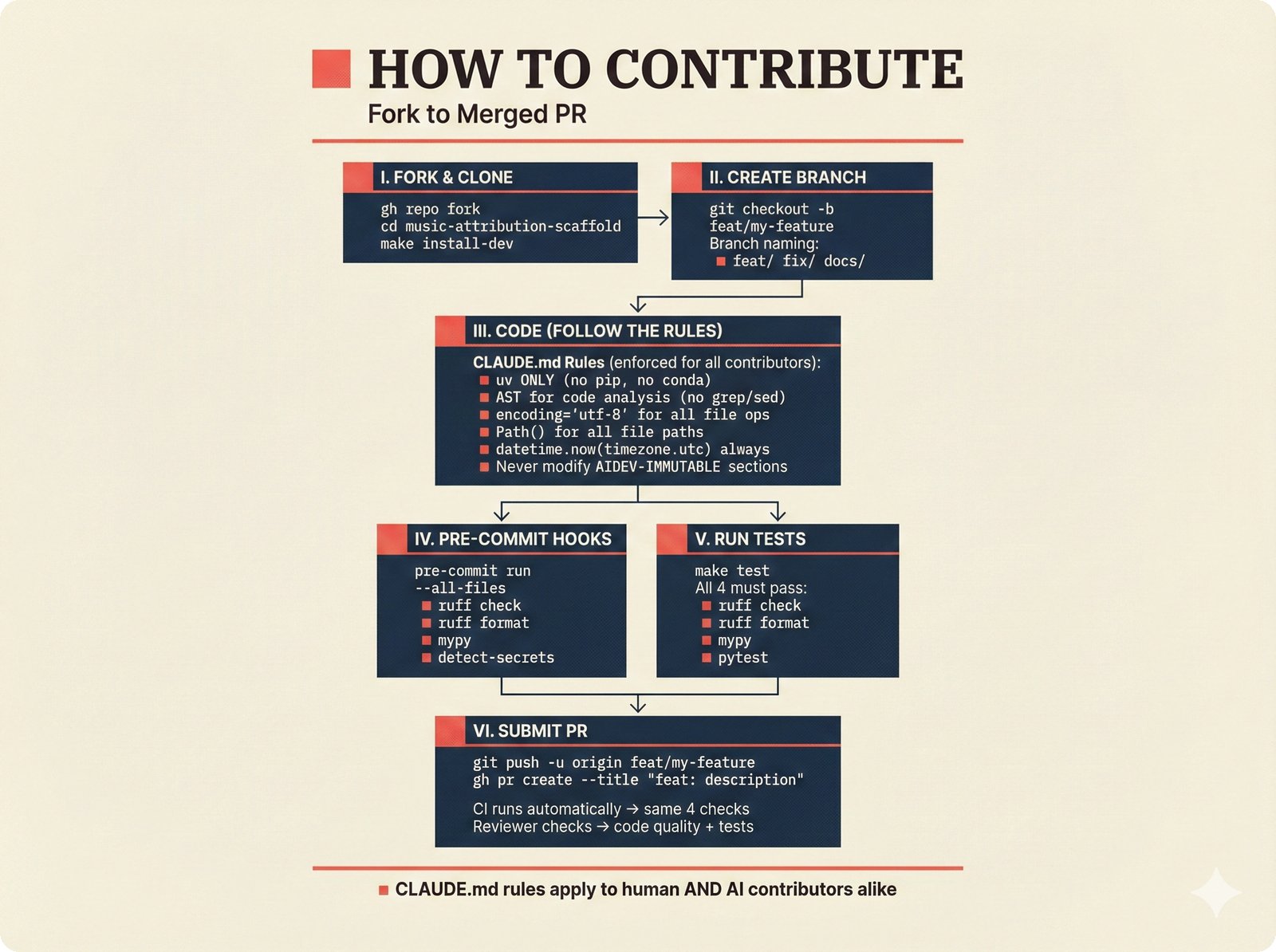

How to Contribute

Contribution workflow for the Music Attribution Scaffold. The CLAUDE.md behavioral contract governs both human and AI contributors equally, with four mandatory quality gates (ruff check, ruff format, mypy, pytest) ensuring that every pull request meets the project's standards for code quality and attribution correctness (Teikari, 2026).

Every contribution follows a six-step path: fork, branch, code (following CLAUDE.md rules), pass pre-commit hooks, pass tests, and submit a PR. The quality gates are non-negotiable -- ruff check, ruff format --check, mypy, and pytest must all pass before any PR is reviewed. Run make install-dev after cloning to set up the development environment.
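To check all four gates locally in one pass, a small runner along these lines could help. It is a sketch, not part of the scaffold: the commands are the ones named above, but running them without extra arguments assumes the project configuration supplies the target paths (mypy in particular may need one).

```python
from __future__ import annotations

import subprocess
from typing import Callable, Sequence

# The four quality gates named in the text, in the order listed there.
GATES: list[list[str]] = [
    ["ruff", "check"],
    ["ruff", "format", "--check"],
    ["mypy"],
    ["pytest"],
]


def run_gates(run: Callable[[Sequence[str]], int] = subprocess.call) -> list[str]:
    """Run every gate and return the commands that exited non-zero."""
    failed: list[str] = []
    for command in GATES:
        if run(command) != 0:
            failed.append(" ".join(command))
    return failed


if __name__ == "__main__":
    failures = run_gates()
    if failures:
        raise SystemExit("Failed gates: " + ", ".join(failures))
    print("All quality gates passed.")
```

Running every gate rather than stopping at the first failure reports all problems at once, which tends to save a round trip before opening the PR.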